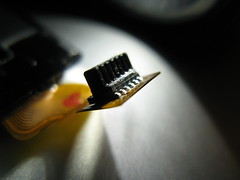

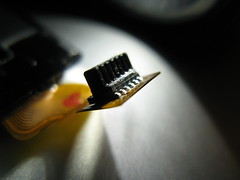

This picture is of a Pentium Classic CPU core I removed from its ceramic packaging.

Here's how I did it. I very carefully put the CPU in a vice, then took a fresh, sharp razor blade and hammered it to cleave off all the pins. This looks nice, but it's messy to clean up. However, it's needed for the next step.

I then hammered the razor blade between the gold plate covering the bottom of the CPU and the ceramic it's glued to. If you're very careful, you can do this without harming the CPU core inside.

Once that's off, you've got a nicely exposed core. However, it's still attached to the ceramic behind it.

I wish I could tell you how I did it, but I can't. It just popped off one day. Since the ceramic behind it was cracked and smashed, a tiny bit of the core was exposed. I guess that was all that was needed. Perhaps I'll get a boatload more someday and try to figure out how to pop out the core without cracking or annihilating it.

For now, just having a collection of exposed 386, 486, and 586 cores is great!

Also, the Flickr to Google Blogger tool works well; I used it to make this post. But you probably knew that.

Wednesday, December 31, 2008

DeoxIT FaderLube - Really Works!

For years I've been fixing and trying to fix lots of everything. I'm that guy that people bring their broken stuff to.

However, there's one thing I could never get quite right: volume controls and other pot (potentiometer) based controls. I'd manage to get the crackle out, but it always came back within a short while. I'd even take the entire pot apart, clean each piece by hand, and put it all back together. But even then, it was never quite right.

I tried WD-40, but that's stinky and yucky and generally not the best idea. I tried 99% isopropyl alcohol, and that would work for a while, but generally not for long. I even went to Radio Shack and got their "Tuner Cleaner" or whatever it's called (they also have a "Contact Cleaner"), but that never seemed to do the trick either. In fact, it left a greasy film on the board, which was almost unavoidable since the spray can would go off like a rocket and hose down just about everything in the immediate area of the room (walls, floors, your face, whatever).

I even tried a few electronics cleaners from Canadian Tire, but without any better results. Everything barely worked.

For a while, before playing a game of Super Breakout on the Atari VCS, I'd take the big knob off and put a few drops of alcohol into the pot, which would last only as long as the game. Plus, since my cleaning process removed the pot's original lubrication, it wouldn't turn as smoothly. You could see the effect of all this while playing: the player paddle at the bottom of the screen would wiggle and wobble and generally react jerkily and poorly during gameplay.

I'd been reading a lot of online forums and blogs and whatnot when I finally started reading about the DeoxIT line of products. They seemed great, and I finally managed to get my hands on some.

It's truly and wonderfully great. It's exactly right. It cleans and lubricates and lasts. You can see the difference in the video game controllers onscreen, and you can hear the difference in the volume controls. It's amazing. Compared to all the other crap I used, it simply kicks everything else in the butt. It's as great as everyone on the internet says it is. It lives up to the hype. I wish I'd had this stuff years ago. I want to treat everything I have in the house with it. It's really that great.

Here's a picture of the stuff I've used it on so far with great results.

So far I've fixed a flaky Atari joystick, Atari paddles, and guitar plugs, pots, and switches.

Plus, the spray nozzle at the top has a selector for how hard you want the spray to come out.

This is a great feature, since I figure I wasted about 50% of an entire can of the old junk I used, just because it hosed down everything in sight with one burst.

If you really want to make it last without wasting it, try what I do. Get an old bottle of eye drops or nasal spray (remove the hose inside to keep it from spraying up), or any small bottle that lets you drip it out, clean it, and carefully fill it from the spray can. Now you can use a few drops when you need to, still use the can to wash out a pot in a hard-to-reach spot, and minimize waste. What's good for the environment is usually good for your wallet.

I wasn't paid to promote this. I believe it's a great product and it helps restore and repair electronics so you can lengthen their life and stretch your dollar.

Having said that, it's not cheap. You can order a can of the diluted F5 solution, like mine, from their website.

DeoxIT FaderLube at Caig.com

They also sell the 100% pure stuff in a tiny tube and a squeeze bottle. It's pure lubricant and drips out thick and slow like honey.

It's great. Gets the crackle out. Honestly wonderful stuff.

Tuesday, December 30, 2008

iPod Mini Repairing - Soldering an SMD connector on a ribbon cable

I recently tried fixing an iPod Mini. It's not entirely simple. The hard part is getting the unit apart. There are two main pieces that go in when they manufacture the device. The first is the main board with the battery and screen. The second is the thumb wheel keypad. They must slide that in first, then the main board, and finally connect the two.

The problem is that the connector is a very tiny surface-mount part on a ribbon cable, so when you try to unplug it, since you can't get a screwdriver in there, you end up ripping the flat ribbon cable away from the connector while it's still plugged in.

I've dealt with this kind of thing before, and since it happened again, I came up with another way of fixing it that I thought I'd share for anyone who might be interested.

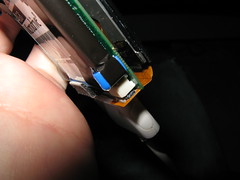

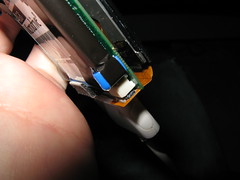

In the first picture you see the leg of the connector separated from the ribbon wire.

The second leg also got pulled off the plastic ribbon, but the trace was still connected to the leg, so it's fine. I used a little super glue (crazy glue) to try to keep things together a bit better. I was afraid I might end up insulating the wire, but that didn't turn out to be an issue.

I tried some rear window defroster paint to connect the leg to the trace, but it didn't grip anything, so it wasn't an option.

Here's what I did. Since I haven't yet Dremeled a fine point on my soldering iron tip for SMD work, I didn't want to use the soldering iron. From past experience, I've noticed that it's too easy to overheat the ribbon connector and melt the whole thing.

Step 1 - I used a pair of pliers to hold the part down. I didn't want to use my vice as it would probably scratch or break the part.

Step 2 - I wrapped copper wire around some tweezers and made a coil in the middle of the length of wire sticking out past the end of the tweezers.

Step 3 - I then cut a tiny piece of solder and placed it carefully beside the leg of the connector.

Here's a picture of the solder piece.

Step 4 - I used a butane mini soldering torch to heat the copper wire coil until it and the end of the wire were red hot.

Step 5 - I placed the tip of the hot wire against the piece of solder and the leg until the solder melted and fused with the leg and ribbon trace.

Step 6 - I pulled away as soon as the solder was flowing.

Here's a picture of the setup, complete with glowing hot copper wire.

And here's a picture of the result.

Now, why would someone want to do this instead of something else? Well, I noticed that the copper wire at the tip cools down very quickly, so it's harder to overheat the ribbon. And since the tip isn't a fine point narrowing from a larger shaft, there's probably no big, hot mass behind it radiating heat the way the rest of a soldering iron does.

I also believe that, while heated, the copper wire gets **much** hotter than the soldering iron. So you can heat a tiny part to a very high temperature, in a very small area, and then pull away and let it cool down fairly quickly. That gets the solder flowing, but lets you stop before things get too hot.
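To put a rough number on the "cools down very quickly" idea, here's a back-of-the-envelope Python sketch of how much heat each piece of metal is holding, using Q = m * c * dT with standard values for copper. The wire size (0.5 mm), the 1 cm heated length, the roughly 600 K rise for "red hot", and the 4 mm by 2 cm tip (modelled as solid copper at about 350 °C) are all hypothetical numbers picked for illustration, not measurements from this repair.

```python
import math

# Standard room-temperature values for copper
COPPER_DENSITY_KG_M3 = 8960.0
COPPER_SPECIFIC_HEAT_J_KG_K = 385.0


def stored_heat_joules(diameter_m, length_m, delta_t_k):
    """Heat stored in a solid copper cylinder raised delta_t_k above ambient (Q = m*c*dT)."""
    volume_m3 = math.pi * (diameter_m / 2) ** 2 * length_m
    mass_kg = COPPER_DENSITY_KG_M3 * volume_m3
    return mass_kg * COPPER_SPECIFIC_HEAT_J_KG_K * delta_t_k


# Hypothetical: the last 1 cm of a 0.5 mm copper wire, glowing red (~600 K above room temp)
wire = stored_heat_joules(0.5e-3, 0.01, 600)

# Hypothetical: a 4 mm x 2 cm iron tip, treated as solid copper, ~330 K above room temp
tip = stored_heat_joules(4e-3, 0.02, 330)

print(f"wire: {wire:.1f} J, iron tip: {tip:.1f} J, tip/wire: {tip / wire:.0f}x")
```

With these made-up dimensions the wire holds only a few joules, a small fraction of what the tip holds. Also, unlike a soldering iron, nothing keeps reheating the wire once it leaves the flame. Treat this as an illustration of the thermal-mass argument, not a measurement.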

Here's the final part, repaired and plugged into the iPod Mini.

So, if you rip this sucker apart, maybe this might help. Although you just can't beat a temperature controlled and finely tipped soldering iron for SMD work.

:)

Monday, June 30, 2008

Comparing Mac2Sell with a real life eBay auction

I recently came across a site that apparently tells you how much your used Apple Mac computer is worth:

http://www.mac2sell.net/

However, rather than taking it at face value, I did a search on http://www.ebay.ca instead and found a recently ended auction for a Mac laptop in Canada.

Here are the details of the auction:

MacBook Pro 1.83GHz 15" screen 2GB RAM 80GB HDD Intel Core Duo MA463LL/A

Item Location: Calgary, Alberta, Canada

26 bids

Winning bid: $1,147.56 CAD

Shipping to Canada: $35.64 CAD

Total: $1,183.20 CAD

Here are the results of a www.mac2sell.net search:

MacBook Pro 15 inch Intel Core Duo 1.83 GHz 2048 / 80 GB / superdrive

The Mac2Sell Quoted Value is

Total: $1,190.00 CAD

Difference: the eBay total is 99.43% of the Mac2Sell quote, just $6.80 under it.

Although one sample isn't exactly science, after a few other searches I found that the site gives back numbers close to what I'd expect. It seems pretty much bang on to me.

So the next time you want to pick up a Mac computer on eBay, instead of getting caught up in a bidding war and maybe paying too much, you could simply do a Mac2Sell search, set that as your maximum bid, and see what happens. Worst case, you know you haven't overpaid in the heat of bidding.
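Here's the arithmetic behind that comparison as a small Python sketch, using the auction and quote figures above (all in CAD). The `max_bid_for_quote` helper is a hypothetical refinement of the "use the quote as your maximum bid" idea: it subtracts shipping so that bid plus shipping stays at or under the quote.

```python
def compare_to_quote(winning_bid, shipping, quote):
    """Return (total paid, total as a fraction of the quote, dollars under the quote)."""
    total = winning_bid + shipping
    return total, total / quote, quote - total


def max_bid_for_quote(quote, shipping):
    """Hypothetical helper: highest bid that keeps bid + shipping at or below the quote."""
    return max(quote - shipping, 0.0)


# Figures from the eBay.ca auction and the Mac2Sell quote
total, ratio, under = compare_to_quote(1147.56, 35.64, 1190.00)
print(f"Total paid: ${total:,.2f}")
print(f"Total as % of quote: {ratio:.2%}")
print(f"Under the quote by: ${under:,.2f}")
print(f"Max bid to stay within quote: ${max_bid_for_quote(1190.00, 35.64):,.2f}")
```

Note that the 99.43% figure is a ratio (eBay total divided by the quote), so the actual gap is about 0.57%.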

Or if it's http://www.craigslist.org you're searching, now you've got a great reference tool for both buying and selling.

Cool.

Tuesday, June 03, 2008

Time Machine ready to roll

After watching this YouTube video,

http://www.youtube.com/watch?v=oRWwI61so5Q&NR=1

I have proceeded to build my own time machine, and I have switched it on and already have my emails from the future.

It turns out that, in the future, gas prices are still high, and people are still douchebags.

http://www.cbc.ca/consumer/story/2008/05/27/ot-gas-080527.html

Also, no space cars yet.

http://www.glumbert.com/media/spacecar

Damn.

Sunday, April 27, 2008

Apple Ideas

Alright, I have to write about this, if only for my own personal time-stamp. It's probably in no way worth reading.

A few years ago I started thinking, "Why don't Macromedia and Adobe merge?" It seemed pretty obvious to me. Then a few years later it happened. At the time, I was also thinking that they could buy a Linux company and produce a bootable Live CD with trial / serial-activated versions of their software suite. Well, that last part never happened.

Then, when the SCO case came out, I was thinking, "Hey, this is total crap. It's not going to work out for them. Too bad I can't short their stock." It was all new then, but that's how it turned out. Nothing exciting there; most Slashdot nerds were thinking the same thing.

I was also wishing I could buy Google stock way back when it was growing, but everyone thought it was going to stop and have some kind of correction. That one would have worked out too. But alas, I'm not an investor.

Anyway, I've been reading and watching and listening to a lot of personal computer history lately, and in particular I've been reading about Commodore in On The Edge. As I'm reading this, I'm thinking "Hey, how come Apple doesn't make even more stuff in house?"

Think about it. Back in the day, making a computer was really making a computer. It meant lots of ROM code and chips and video circuitry and disk controllers, and figuring out where things go and how they should work. Today it's basically a CPU, almost always an x86, and a chipset, and you're ready to roll.

I was thinking: just as Commodore bought MOS Technology in search of vertical integration, why doesn't Apple buy some chip company?

I just about lost my mind just now when I happened to catch that Apple has done exactly that.

So, from now on, I'm going to post all my crazy little ideas of what I think could, or might, or even should happen, just so I can say, if only to myself, "See, I saw that one coming."

Also, just for the record, though I have little hope, proof, or even supporting ideas, I think Apple could get into the car market, as in building embedded systems for cars. Don't ask me why. I have no clue. I just see Apple in cars, the way Microsoft thought it would be but never was.

Wednesday, April 23, 2008

Living Frugal Isn't very Rock and Roll

Being rich isn't cool. Spending money like you don't care is cool.

What's cool about being cheap? There's no payoff for bystanders. When someone is totally rich, drives a beat-up old car, shops at thrift stores, buys and sells things used on auction sites and classifieds, and generally doesn't go out throwing lavish parties or having opulent dinners, then anyone else standing on the sidelines isn't getting a very cool payoff. If they themselves aren't wealthy, it's always at least a little fun to watch and live vicariously through someone else's over-the-top escapades.

The interesting effect of this is that there's a social reward feedback loop that kicks in when someone within a social group starts earning what they perceive to be a lot of money. You get to be a little famous for being Mr. Moneybags.

The payoff is even higher if you do silly and outrageously unnecessary things. This is the essence of 'bling'.

So if someone is in a peer group of people who don't come from a history of money, and that person then makes a lot of money, they are highly likely to splurge here and there, which gets the attention of others, creates some social validation, and reinforces the entire cycle until the wealthy person is wealthy no longer.

You can see this in the statistic that the average lottery winner goes back to having a job and a life roughly similar to their previous one, all within about 2 years. [ref 1 needed]

Anyone who's ever gotten a big raise at work knows that, after taxes and deductions, it never ends up feeling like a whole lot when the next paycheck arrives. But it's not the numbers that end up driving the purchases. It's the sense of wealth that a person's current salary and position suggest. It's probably different for everyone.

But the net effect is the same. Saving money just isn't cool. It definitely isn't Rock Star livin'. Just ask Willie Nelson.

http://moneycentral.msn.com/content/SavingandDebt/P75072.asp

Saturday, April 19, 2008

LCD vs. CRT vs. Trees vs. Michael Bay?

Anyone browsing craigslist recently would have probably noticed something in the technology section. There are tons and tons of free CRT monitors unable to find a home.

I don't know how much environmental harm this mass exodus of the mighty CRTs from our businesses and homes will cause, but it'll probably be bad. In fact, one could work out an 'environmental return on investment', or EROI, by calculating some index of environmental harm and comparing it to the environmental benefits of the power savings of LCDs.

It's funny to think that something that solves one problem, power-hungry CRTs burning up energy, creates another, CRT landfill, and that at some point in the future enough time will have passed such that the second problem will be exhausted (the damage from CRTs) while the benefits of the first will have aggregated over time. I for one do **not** volunteer.

However, what we can do is measure the power differences! Is it science? Who cares, it's fun! So I whipped out my Kill A Watt and did some measurements.

It turns out that how you use **both** changes the power usage. Here's the raw data:

- CRT Monitor -

All White Screen: 90 watts

All Black Screen: 71 watts

Average: 80.5 watts

- LCD Monitor -

All White Screen: 28 watts

All Black Screen: 29 watts

Average: 28.5 watts

- Some interesting numbers -

The CRT uses 27% more power displaying an all white vs. all black screen

The LCD uses about 3.4% less power displaying an all white vs. all black screen

On average, the LCD uses only about 35% of the power of the CRT, or roughly 65% less
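For anyone who wants to check the math, here's a small Python sketch that recomputes these comparisons from the four raw Kill A Watt readings above. `pct_change` is just a plain percent-change helper made up for this; nothing else is assumed.

```python
# Kill A Watt readings from the measurements above, in watts
crt = {"white": 90, "black": 71}
lcd = {"white": 28, "black": 29}


def average(readings):
    """Mean of the all-white and all-black readings."""
    return (readings["white"] + readings["black"]) / 2


def pct_change(new, old):
    """Percent change going from old to new (positive means more power)."""
    return (new - old) / old * 100


crt_avg, lcd_avg = average(crt), average(lcd)
print(f"CRT white vs. black: {pct_change(crt['white'], crt['black']):+.1f}%")
print(f"LCD white vs. black: {pct_change(lcd['white'], lcd['black']):+.1f}%")
print(f"LCD average as a share of CRT average: {lcd_avg / crt_avg:.0%}")
print(f"LCD average vs. CRT average: {pct_change(lcd_avg, crt_avg):+.1f}%")
```

The last two lines are the same fact stated two ways: 28.5 W is about 35% of 80.5 W, which is about 65% less.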

It turns out, to no one's surprise, that a CRT monitor displaying nothing but black uses less power than when the screen is all white. This is because the electron gun firing at the screen is doing mostly nothing when drawing black. When the screen is white, the gun is firing all the time in order to light up the whole screen, so you get about 19 more watts of power drain.

This is pretty interesting, since we usually don't think about **how** we use something as affecting the environment, especially when it comes to technology. If you have a CRT monitor, and a really bright and mostly white screensaver and desktop wallpaper, you're using up as much power and money, and doing as much damage as possible.

However, I didn't think that LCDs would register a noticeable difference, never mind the opposite effect! But it makes sense. With an LCD screen, the bulb inside is running all the time, regardless of what we are doing. The screen itself acts like a series of small electronic sunglasses, turning on completely to block out all the light and create a black pixel, and turning off completely to let the light through and create a white pixel.

It may only be 1 watt of difference, but it's interesting just the same. It means that if your screensaver, desktop wallpaper, and daily activities are black or mostly dark, then you're maximizing the power, money, and adverse environmental effects of your monitor.

But clearly, LCDs use so much less power that you're still better off going LCD, for your power bill, your money, and your environmental karma.
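To turn watts into something closer to money, here's a rough Python sketch of yearly energy use from the average readings above. The 8 hours a day of use and the $0.10/kWh price are assumptions for illustration, not figures I measured; plug in your own usage and your utility's rate.

```python
# Average draws from the measurements above, in watts
CRT_AVG_W = 80.5
LCD_AVG_W = 28.5

# Assumptions (not from the measurements): usage pattern and electricity price
HOURS_PER_DAY = 8
DAYS_PER_YEAR = 365
PRICE_PER_KWH = 0.10  # hypothetical rate, in dollars


def annual_kwh(watts, hours_per_day=HOURS_PER_DAY, days=DAYS_PER_YEAR):
    """Energy used per year in kilowatt-hours."""
    return watts * hours_per_day * days / 1000


crt_kwh = annual_kwh(CRT_AVG_W)
lcd_kwh = annual_kwh(LCD_AVG_W)
saved = crt_kwh - lcd_kwh
print(f"CRT: {crt_kwh:.0f} kWh/yr, LCD: {lcd_kwh:.0f} kWh/yr")
print(f"Saved: {saved:.0f} kWh/yr, about ${saved * PRICE_PER_KWH:.2f}/yr")
```

With those assumptions, swapping the CRT for the LCD saves roughly 150 kWh a year; the dollar figure depends entirely on your rate and how long the monitor is on.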

But here's what I really want to know:

What did Google's all white homepage cost the environment in carbon emissions back when CRTs were the common monitor?

What does Windows XP's default mostly black screensaver cost the environment in carbon emissions now that LCDs are the common monitor?

How many trees cry every time a Michael Bay movie explosion whites out a screen on a home theater system?

Friday, April 18, 2008

Old Computers

First of all, I love computers. New and old. Technology is great. Sign me up and plug me in!

I was talking to my brother recently about old computers and what it is I like about them.

There are a lot of things about old computers that are great. Just tonight I realized that you could actually have a fairly complete understanding of a 1980s personal computer from top to bottom: from the logic gates, to the CPU, whether it's a Z80, MOS 6502, or Intel 8086, through to the operating system, and right up into the program. Head to toe.

Today when you take a computer science course, you learn little pieces here and there: a logic gate, or the ideas of a low-level programming language, often in the form of an emulated CPU, or maybe a fictional CPU designed for learning. Maybe you even build a binary adder. But then it's back to learning something useful, like Java or C#.

Maybe I'm wrong, since I'm not in university right now, but learning some of these basic things by making a logic gate or an adder feels like growing a single blade of grass as a starting point for learning football. It's so far removed.

It seems like making computers do things is mostly a case of taking an x86-based CPU, some fully realized OS, a bytecode-based virtual machine, a whole whack of libraries and APIs, and an editor or IDE with full knowledge of those APIs, and boom, you finally start making something.
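For anyone who hasn't built one, here's roughly what "building a binary adder" boils down to, sketched in Python rather than in hardware: a full adder made only of AND, OR, and XOR gates, chained into an 8-bit ripple-carry adder. The function names are my own, and a real circuit would wire gates together rather than loop over bits.

```python
def and_gate(a, b):
    return a & b


def or_gate(a, b):
    return a | b


def xor_gate(a, b):
    return a ^ b


def full_adder(a, b, carry_in):
    """Add three bits using only gates; returns (sum_bit, carry_out)."""
    partial = xor_gate(a, b)
    sum_bit = xor_gate(partial, carry_in)
    carry_out = or_gate(and_gate(a, b), and_gate(partial, carry_in))
    return sum_bit, carry_out


def ripple_carry_add(x, y, width=8):
    """Add two unsigned integers bit by bit, like a chain of full adders in hardware."""
    carry = 0
    result = 0
    for i in range(width):
        a = (x >> i) & 1
        b = (y >> i) & 1
        s, carry = full_adder(a, b, carry)
        result |= s << i
    return result, carry  # carry == 1 means the sum overflowed `width` bits


print(ripple_carry_add(100, 27))   # (127, 0)
print(ripple_carry_add(200, 100))  # (44, 1): 300 doesn't fit in 8 bits
```

Each `full_adder` hands its carry to the next bit, which is the same chain you'd wire up on a breadboard; the final carry flags an overflow past 8 bits.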

This makes sense as it evolved out of needs to encapsulate layers of very annoying details away so that it's easier to create and maintain your project. Or perhaps your piece of the project.

I think this is all great, really I do. Ruby on Rails is really quite awesome!

It all seems like so much. So many layers and pieces, all of which are moving targets, and all of which need constant refreshing. It seems like we went from swimming in different but well-known ponds - Apple, Commodore, IBM, DOS, CP/M - to one great big ocean. It's overwhelming to understand, and sometimes underwhelming to explore. Things got both more complex and more homogeneous.

Using computers today seems so much like watching TV. Web pages turned into databases, which turned into web apps, which turned into social web apps, which turned into 1-click perpetual payoff. Meanwhile, desktop apps are more and more like web pages.

But I think the experience of using computers today has lost something else.

You see, the GUI changes the very idea of what using a computer should be.

Let's take a side trip. Imagine you're living thousands of years ago in a thriving village, and things are going so well that there's lots of food and no worry of war or scary animals. Now imagine your belly is full and you're looking out into the wilderness. What would you be thinking?

Now imagine you're living in a modern city today, and you're sitting at a restaurant after eating, looking at a dessert menu. What are you thinking now?

When the GUI first came on the scene, it offered a very friendly way of using a computer. Instead of having to know a bunch of stuff, you are presented with a list. All the functions and programs of the computer are laid out in a nice little menu, complete with pictures.

What this is saying to the user is "We've figured everything out for you, and here's all the things you can do. Wouldn't you like to pick something to do? We've made a nice menu for you to choose from."

With the advent of the modern internet, that dessert menu is pretty damn big. So big, in fact, that you can spend an almost indefinite period of time getting entertained by it.

But way back in the early days of the personal computer, this is what it was like.

You got a prompt. A flashing green cursor.

You don't get to pick from a list of things to do. You can do anything you want. You can do anything.

There's no list. There's no waitress walking you pleasantly through a glossy catalog of choices.

You're faced with your own two hands and your ability to explore and create. That flashing green cursor is sitting there waiting for you to go, or do, or be anything you want it, or yourself, to be.

It's a canvas, not a catalog.

I realize that most people know what they want, and they like it when someone else has figured it all out for them. What I wonder about is the kids.

They get blasted into an ocean full of high-speed internet whiz-bang, instead of sitting on the shore, wondering what's out there, and maybe making their own raft.
